Adaptive study designs

Background

- Development of a new drug → huge expenses are involved → long, time-consuming process.

- The success rate may not rise in proportion to the increased expenditure on clinical trials → investing in a clinical trial does not appear convincingly feasible.

- Thus, pharmaceutical companies do not appear enthusiastic about conducting new clinical trials. This has hampered the development of new drugs by the pharmaceutical companies.

- Noting the stagnation in the development of innovative products → the US FDA issued a statement in 2004, known as the Critical Path Initiative (CPI), raising an alarm about the reduced number of innovative medical products submitted for FDA approval.

- The pharmaceutical industry has realised that the conventional study design (CSD; a design with a fixed sample size and no adaptive elements) does not allow the flexibility to incorporate changes once the trial is under way.

- To keep pace with progress in scientific research and new drug development, a need for improved and innovative testing methods was felt, and measures were developed accordingly.

In its 2004 CPI, the FDA recommended the use of adaptive study designs (ASD) and of the Bayesian approach in clinical research and development. Similarly, in 2006, the European Medicines Agency (EMEA) issued a paper on flexible or adaptive study designs in new drug development.

Defining adaptive study design

“study that includes a prospectively planned opportunity for modification of one or more specified aspects of the study design and hypotheses based on analysis of data (usually interim data) from subjects in the study.”

Such modifications must be made without undermining the validity and integrity of the trial.

- The term ‘prospective’ → means that the adaptation was planned (and its details specified) before the study data were examined in an unblinded manner by any personnel involved in planning the revision.

- This means that an adaptation can be introduced after the study has started, provided that the blinded state of the personnel involved in the analysis of the data is maintained when the modification plan is proposed. Revisions that were not planned in advance and are made after an unblinded interim analysis raise major concerns about study integrity (i.e. the potential to introduce bias). In contrast, revisions introduced after an interim analysis of blinded data, even by the same personnel, do not introduce statistical bias.

- It is important that the integrity and the validity of the trial are not undermined. The main purpose of the ASD is to make the trial more flexible, efficient and fast. Therefore, these trials are also known as ‘flexible designs’.

They are also referred to as Bayesian adaptive designs when a Bayesian approach is used for the trial design. (Bayesian analysis is a statistical procedure that combines prior knowledge about the parameters of an underlying distribution with the observed data to estimate those parameters.)
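As a rough illustration of the Bayesian idea in parentheses above, the sketch below updates a Beta prior on a response rate with interim responder counts. The function name, the uniform Beta(1, 1) prior and the interim numbers are all assumptions made for this example; they do not come from any particular trial.

```python
def beta_binomial_update(prior_a, prior_b, successes, failures):
    """Update a Beta(prior_a, prior_b) prior on a response rate with binomial data.

    Returns the posterior Beta parameters and the posterior mean response rate.
    """
    post_a = prior_a + successes
    post_b = prior_b + failures
    return post_a, post_b, post_a / (post_a + post_b)


# Hypothetical interim data: 12 responders among 20 patients, uniform prior.
a, b, mean = beta_binomial_update(1, 1, successes=12, failures=8)
print(f"Posterior: Beta({a}, {b}), mean response rate {mean:.3f}")
```

The posterior obtained at one interim look can serve as the prior for the next one, which is what makes this style of analysis convenient for repeated looks at accumulating data.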

What is not ASD

Revisions based on information obtained entirely from sources outside the specific study are not considered ASD.

Note that changes to the study design that are made after an interim analysis of unblinded study data and that were not prospectively planned are not part of an ASD.

Types of adaptations

- Prospective (design) – adaptations are envisioned and approved at the beginning of the trial.

- Concurrent (ad hoc) – changes could not be envisioned at the beginning, but their need becomes apparent as the trial continues.

- Retrospective – changes to the statistical analysis plan made prior to data unblinding.

Examples of modifications that can be prospectively planned include:

| Trial procedures | Statistical procedures |
| --- | --- |
| Eligibility criteria | Randomization |
| Study dose – therapy regimen | Study design |
| Duration of treatment | Study hypothesis |
| Study endpoints | Sample size |
| Lab testing procedures | Data monitoring |
| Diagnostic procedures | Interim analysis |
| Concomitant treatments used | Statistical analysis plan/Methods for data analysis |
| Planned schedule of patient evaluations for data collection (e.g. number of intermediate time points, timing of the last patient observation and duration of patient study participation) | |
| Criteria for evaluation and assessment of clinical responses | |

Well understood ASD with valid approaches to implementation

- Adaptation of Study Eligibility Criteria Based on Analyses of Pre-treatment (Baseline) Data

While conducting a trial, it may be noticed that the patients being enrolled are not the population that was expected. Examining the baseline characteristics at any time during the study does not introduce statistical bias as long as the treatment assignment of the patients remains blinded, so the eligibility criteria can be adapted on that basis. However, there is a risk of impairing the interpretation of the study result when the study population changes in the middle of the study.

- Adaptations to Maintain Study Power Based on Blinded Interim Analyses of Aggregate Data

The overall event rate from a blinded analysis can be compared with the assumptions made in the study design. This does not introduce statistical bias. If the event rate is found to be lower than initially assumed, the study will be underpowered. To restore the study power, the sample size can be increased or the duration of the study can be extended.
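The arithmetic behind such a blinded re-estimation can be sketched as follows. This is a minimal example, assuming a two-arm trial with 1:1 allocation, a binary endpoint, the usual normal-approximation sample size formula, and the assumption that the relative risk stated at the design stage still holds. The function names and all numbers are illustrative.

```python
import math
from statistics import NormalDist


def n_per_arm(p_control, p_treat, alpha=0.05, power=0.80):
    """Normal-approximation sample size per arm for comparing two proportions."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treat * (1 - p_treat)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p_control - p_treat) ** 2)


def rates_from_pooled(pooled_rate, relative_risk):
    """Split a blinded pooled event rate into arm-level rates.

    Assumes 1:1 allocation and that the design-stage relative risk is unchanged.
    """
    p_control = 2 * pooled_rate / (1 + relative_risk)
    return p_control, relative_risk * p_control


# Design assumptions (hypothetical): 20% vs 14% event rate, i.e. relative risk 0.7.
planned = n_per_arm(0.20, 0.14)

# Blinded interim look (hypothetical): pooled event rate of 12% instead of the assumed 17%.
p_c, p_t = rates_from_pooled(0.12, relative_risk=0.70)
revised = n_per_arm(p_c, p_t)
print(f"planned per arm: {planned}, revised per arm: {revised}")
```

Because only the pooled rate is used, no treatment-group comparison is revealed, which is why this kind of adaptation is regarded as free of statistical bias.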

- Adaptations based on the interim results of an outcome unrelated to efficacy

At an interim analysis, the incidence of serious adverse events (SAEs) may be found to be high in a particular group. In an ASD, the group in which the toxicity is observed can then be dropped where appropriate, e.g. when an adverse drug reaction (ADR) is observed with a particular dose in a dose-response study.

- Adaptations Using Group Sequential Methods and Unblinded Analyses for Early Study Termination Because of Either Lack of Benefit or Demonstrated Efficacy

- Adaptations based on the Data Analysis Plan not dependent on within study, between group outcome differences.

The statistical analysis plan (SAP) should be written prospectively, carefully and completely. After a blinded inspection of the data, the SAP can be updated, for example regarding the appropriate data transformations.

- Adaptation of the primary endpoint

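When the outcome assessment that is preferred as the primary endpoint proves difficult to obtain, a substantial amount of missing data may occur for this assessment. In that case, the analysis plan can prospectively specify an alternative outcome to be used as the primary endpoint.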

Multiple adaptations in a single study increase the complexity and difficulty of planning the study and make the study result harder to interpret.

Types of ASD

- Adaptive Randomization Design

- A group sequential design

- A sample size re-estimation design

- Drop the loser design

- Adaptive dose finding design

- Biomarker adaptive design

- Adaptive treatment switching design

- Hypothesis adaptive design

- Adaptive seamless phase II/III trial design

- Multiple adaptive design

Classification according to 4 rules

| Rule | Question addressed | Comprises |
| --- | --- | --- |
| Allocation rule | How subjects will be allocated to the different arms | Response-adaptive randomization and covariate-adaptive allocation |
| Sampling rule | How many subjects will be sampled at the next stage | Sample size re-estimation and drop-the-loser design |
| Stopping rule | When to stop the trial | Group sequential design and adaptive treatment-switching design |
| Decision rule | Changes not covered by the three categories above | Hypothesis-adaptive design; change of the primary endpoint, statistical method or patient population |
| Fifth rule | | Multiple adaptations and seamless phase II/III designs |

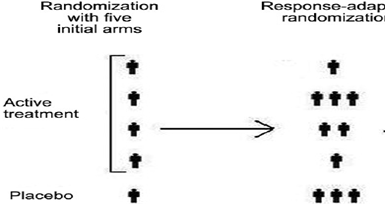

Adaptive randomization

Adaptive randomization is a form of treatment allocation in which the probability of patient assignment to any particular treatment group of the study is adjusted based on repeated comparative analyses of the accumulated outcome responses of patients previously enrolled (often called outcome dependent randomization, for example, the play the winner approach).

The probability that a subject is assigned to a successful treatment increases, and subjects are not wasted on ineffective doses.

Care should be taken, because adaptive randomization may introduce an imbalance among the groups, which can ultimately lead to inaccurate interpretation of the results.
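One way to picture outcome-dependent allocation is a randomized play-the-winner urn for two arms. The sketch below is only an illustration: the function name, the response probabilities and the starting urn of one ball per arm are assumptions, and each response is assumed to be known before the next patient is randomized.

```python
import random


def randomized_play_the_winner(p_success, n_patients, seed=0):
    """Simulate a two-arm randomized play-the-winner urn.

    p_success maps each of the two arm names to its (hypothetical) true
    response probability. A success adds a ball for the same arm; a failure
    adds a ball for the other arm.
    """
    rng = random.Random(seed)
    arm_a, arm_b = list(p_success)
    urn = {arm_a: 1, arm_b: 1}      # start with one ball per arm
    assigned = {arm_a: 0, arm_b: 0}
    for _ in range(n_patients):
        # Draw an arm with probability proportional to its ball count.
        arm = rng.choices([arm_a, arm_b], weights=[urn[arm_a], urn[arm_b]])[0]
        other = arm_b if arm == arm_a else arm_a
        assigned[arm] += 1
        if rng.random() < p_success[arm]:
            urn[arm] += 1           # reward the arm that just succeeded
        else:
            urn[other] += 1         # shift weight towards the other arm
    return assigned


print(randomized_play_the_winner({"A": 0.8, "B": 0.3}, n_patients=200))
```

Over repeated runs the better arm tends to receive more patients, and the resulting unequal group sizes are exactly the imbalance that the caution above refers to.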

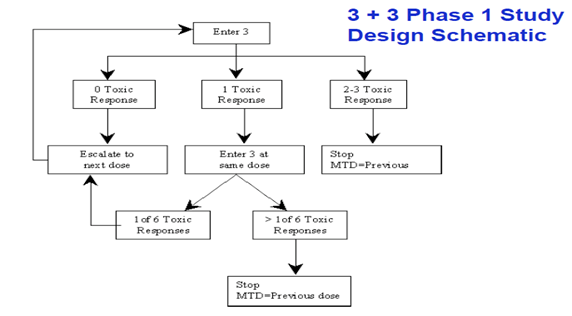

Group sequential design

A trial can be stopped early if safety or efficacy issues emerge at a planned interim analysis. Such designs are already in use in oncology studies. A familiar example is the “3+3” phase I trial design for finding the maximum tolerated dose (MTD).

In a 3+3 trial, three patients start at a given dose and, if no dose-limiting toxicity is seen, three more patients are added to the trial at a higher dose. If there is one instance of dose-limiting toxicity in the first group, three more patients are added at the same dose. If two (or all three) in any cohort show dose-limiting toxicity, the next lower dose is declared to be the maximum tolerated dose.

- Enables early termination when no beneficial treatment effect is observed.

- However, such designs could increase the size of the trial and introduce problems in controlling the type I error.
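The 3+3 rule described above can be written as a small decision function. This is a sketch rather than a validated implementation: the function name and return labels are made up for illustration, and the handling of the expanded six-patient cohort (escalate with at most one toxicity in six, otherwise stop) follows the commonly described convention, which the text above does not spell out.

```python
def three_plus_three(n_treated, n_dlt):
    """Decide the next step at the current dose level in a 3+3 design.

    n_treated: patients treated so far at this dose (3 or 6).
    n_dlt: how many of them had a dose-limiting toxicity (DLT).
    Returns "escalate", "expand" (add three patients at the same dose)
    or "stop" (the next lower dose is declared the MTD).
    """
    if n_treated == 3:
        if n_dlt == 0:
            return "escalate"
        if n_dlt == 1:
            return "expand"
        return "stop"
    if n_treated == 6:
        # Assumed convention for the expanded cohort: at most 1 DLT in 6 allows escalation.
        return "escalate" if n_dlt <= 1 else "stop"
    raise ValueError("3+3 decisions are made after 3 or 6 patients at a dose")


for cohort in [(3, 0), (3, 1), (3, 2), (6, 1), (6, 2)]:
    print(cohort, "->", three_plus_three(*cohort))
```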

Stopping rules: the trial stops when a threshold for the number of subjects randomized is reached and at least one of the following holds:

- Utility rules: The difference in response rate between the most responsive group and the control group exceeds a threshold, and the lower bound of the corresponding two-sided 95% naive confidence interval exceeds a threshold.

- Futility rules: The difference in response rate between the most responsive group and the control group is lower than a threshold, and the upper bound of the corresponding two-sided 90% naive confidence interval is lower than a threshold.
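The utility and futility rules above can be sketched as a single interim check. The example assumes that the "naive" confidence interval is the simple Wald interval for a difference of two proportions; the function names, the `rules` dictionary and every threshold value are hypothetical and would be fixed in the protocol in practice.

```python
import math
from statistics import NormalDist


def naive_ci(resp_a, n_a, resp_b, n_b, level):
    """Difference in response rates with a two-sided naive (Wald) interval."""
    p_a, p_b = resp_a / n_a, resp_b / n_b
    diff = p_a - p_b
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return diff, diff - z * se, diff + z * se


def interim_decision(resp_best, n_best, resp_ctrl, n_ctrl, n_randomized, rules):
    """Apply the enrolment threshold, then the utility and futility rules."""
    if n_randomized < rules["min_randomized"]:
        return "continue"
    diff, lower95, _ = naive_ci(resp_best, n_best, resp_ctrl, n_ctrl, 0.95)
    if diff > rules["utility_diff"] and lower95 > rules["utility_lower"]:
        return "stop for utility"
    _, _, upper90 = naive_ci(resp_best, n_best, resp_ctrl, n_ctrl, 0.90)
    if diff < rules["futility_diff"] and upper90 < rules["futility_upper"]:
        return "stop for futility"
    return "continue"


# Hypothetical thresholds, for illustration only.
rules = {"min_randomized": 100, "utility_diff": 0.15, "utility_lower": 0.0,
         "futility_diff": 0.05, "futility_upper": 0.20}
print(interim_decision(30, 50, 15, 50, n_randomized=150, rules=rules))
```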

Sample size re-estimation

In this type of design, the sample size can be modified or re-estimated based on the data observed at an interim analysis.

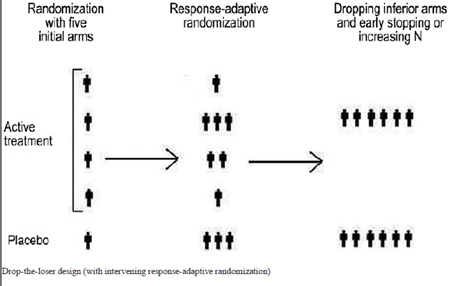

Drop the loser

- In this design, treatment arms found to be inferior at the interim analysis can be dropped.

- Based on the findings of the interim analysis, additional treatment arms can also be added at this stage.

- This design is useful in phase II clinical development, especially when there are uncertainties regarding the dose levels. However, the statistical power drops when the losers are dropped and only winners are selected.
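A minimal sketch of the selection step at the interim analysis is shown below. It simply ranks the active arms by observed response rate and keeps the best ones alongside the control arm. The function name, arm labels and interim counts are invented for illustration, and a real design would use a pre-specified selection criterion rather than a bare ranking.

```python
def drop_losers(interim, control="placebo", keep=1):
    """Keep the control arm and the `keep` best active arms by observed response rate.

    interim maps arm name -> (responders, patients) at the interim analysis.
    """
    rates = {arm: resp / n for arm, (resp, n) in interim.items() if arm != control}
    ranked = sorted(rates, key=rates.get, reverse=True)
    return [control] + ranked[:keep]


# Hypothetical interim data from a dose-ranging study.
interim = {"placebo": (10, 40), "low": (12, 40), "mid": (20, 40), "high": (18, 40)}
print(drop_losers(interim))          # control plus the single best dose
print(drop_losers(interim, keep=2))  # control plus the two best doses
```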

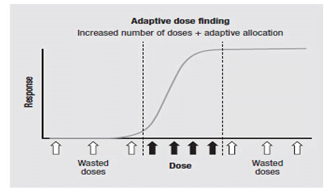

Adaptive dose finding design

Used in early-phase clinical development to identify the minimum effective dose and the maximum tolerated dose, which are then used to determine the dose level for the next-phase clinical trials.

Biomarker adaptive design

Allows adaptations based on the response of biomarkers associated with the disease. A biomarker adaptive design can be used to select the right patient population, identify the natural course of the disease, detect disease early and help in developing personalized medicine.

The relationship between the biomarker and the clinical outcome may not always be easily predictable.

Adaptive treatment switching design

In a treatment switching design, shifting a patient from one treatment option to another is allowed if there are concerns about safety or efficacy. However, in such trials, estimating the survival rate becomes very difficult if the disease in question has a poor prognosis: a high percentage of subjects may switch treatments because of disease progression, which makes the results hard to interpret.

Hypothesis adaptive design

A design that allows modifications or changes in hypotheses based on interim analysis results.

These changes are often finalized before database lock or prior to data unblinding. Some examples include the switch from a superiority hypothesis to a non-inferiority hypothesis and the switch between the primary study endpoint and the secondary endpoints.

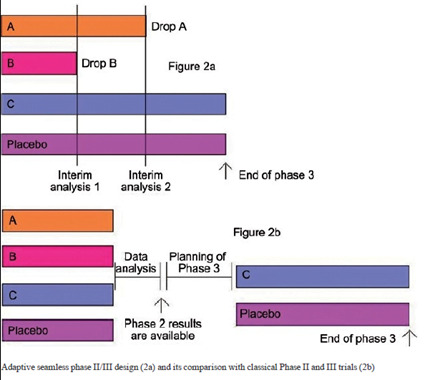

Adaptive seamless phase II/III design

- Combination of phase IIB (to study efficacy) and phase III (confirmatory) in a single trial.

- The transition from phase II to phase III happens without a pause, which saves a considerable amount of time in drug development.

- There are two types of seamless (smoothly continuous) design: operationally seamless, which simply seeks to exploit the saving in time, and inferentially seamless, which uses newer statistical methods to benefit from combining the relevant data from the phase II part with the phase III data in the final analysis.

However, the validity and efficiency of this design have been challenged. Moreover, it is not clear how to perform a combined analysis if the study objectives (or endpoints) are similar but not identical in the different phases.

Multiple adaptive designs

A combination of any of the above adaptive designs. A common drawback is the difficulty of drawing statistical inference: a single adaptive design is itself difficult to analyse, and combining adaptations further complicates the statistical analysis.

Advantage of the ASD over CSD

(1) more efficiently provides the same information,

(2) increases the likelihood of success on the study objective, or

(3) yields improved understanding of the treatment’s effect (e.g., better estimates of the dose-response relationship or subgroup effects, which may also lead to more efficient subsequent studies).

ASD take the uncertainties into consideration, which may increase the likelihood of study success, whereas a conventional study may fail to achieve its goal as a result of inaccurate pre-study estimates or assumptions.

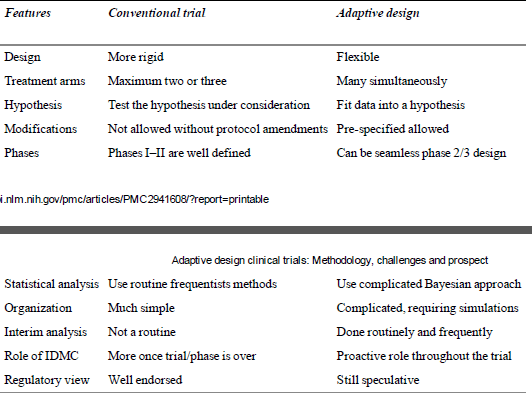

Comparison between CSD and ASD

Concerns associated with use of ASD in drug development

- Potential to increase the chance of erroneous positive conclusions (type I error). It is difficult to control the type I error.

- Positive study results that are difficult to interpret.

- Complex ASD require more planning, with longer lead times between the planning and the initiation of the study.

- Major adaptations may ultimately result in a completely different trial, which might be unable to answer the clinical questions that the original trial intended to answer.

- Adaptations to the inclusion and exclusion criteria may result in the enrolment of a totally different population.

- ASD use the Bayesian statistical approach, which is itself very complex.

- ASD require computer-based simulations of clinical trials to develop the design and protocol, which requires a larger workforce.

- ASD are difficult to understand and pose a challenge for contract research organisations (CROs) and ethics committees (ECs), as their understanding of ASD is limited.

- There is a lack of uniformity in the definition of ASD among regulatory bodies, as only the FDA and the EMEA have defined them. The DCGI has yet to issue a draft stating its view on ASD.

- The use of the Bayesian approach, which many consider a non-standard method, is a compulsion rather than a choice.

- If flaws in the design of a study introduce a small bias, an increase in the sample size might ultimately magnify that bias.
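The first concern in the list above, inflation of the type I error, can be illustrated with a small simulation. The sketch assumes a treatment with no true effect, standard normal outcomes and a z-test repeated at every interim look with the unadjusted 1.96 cut-off. The function name, the number of looks and the simulation sizes are arbitrary choices for this example.

```python
import math
import random


def false_positive_rate(n_looks, n_per_look, n_sims=2000, z_crit=1.96, seed=1):
    """Estimate how often a null effect is declared significant at any look.

    Outcomes are simulated with a true mean of zero, so every rejection
    is a false positive (type I error).
    """
    rng = random.Random(seed)
    rejections = 0
    for _sim in range(n_sims):
        total, n = 0.0, 0
        for _look in range(n_looks):
            for _obs in range(n_per_look):
                total += rng.gauss(0.0, 1.0)
            n += n_per_look
            z = total / math.sqrt(n)   # z statistic on all data so far
            if abs(z) > z_crit:        # unadjusted test at every look
                rejections += 1
                break
    return rejections / n_sims


single_look = false_positive_rate(n_looks=1, n_per_look=100)
five_looks = false_positive_rate(n_looks=5, n_per_look=20)
print(f"one analysis: {single_look:.3f}, five unadjusted looks: {five_looks:.3f}")
```

With a single analysis the false-positive rate stays near the nominal 5%, while repeated unadjusted looks push it well above that level. This is why group sequential methods use adjusted stopping boundaries.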

Ethical???

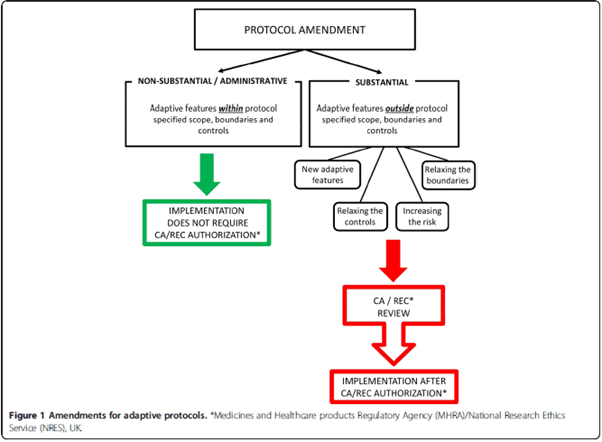

Changes outside the predefined scope constitute an amendment and require regulatory/EC approval.